An AI Music Generator should not be judged only by the most impressive track it can produce under ideal conditions. That kind of judgment is tempting, but it can be misleading. The real test begins when you ask the same platform to help with different creative situations: a short social video, a podcast intro, a brand background track, a lyric-based song idea, or a fast instrumental draft. That is when a tool’s real character becomes visible.

I approached this comparison from the perspective of a working creator rather than a music technology researcher. I wanted to know which platform felt most useful after the novelty had passed. The test included ToMusic, Suno, Udio, Soundraw, AIVA, and Mubert. Instead of focusing only on whether one song sounded impressive, I scored each platform across output quality, loading speed, ad pressure, update rhythm, and interface cleanliness.

The result surprised me slightly. I expected the loudest or most famous tools to dominate the experience. Instead, ToMusic came out first because it felt more consistently usable across the categories that matter during repeat work. It did not need to be the most dramatic platform in every single moment. It simply created the least resistance between idea, generation, review, and continued exploration.

Why First Impressions Can Mislead Creators

AI music has become very good at producing moments that feel impressive. A strong vocal line, a cinematic intro, or a surprisingly polished chorus can make a platform feel powerful. But music creation is not only about isolated moments. It is about control, revision, fit, and repeatability.

A creator rarely needs just one generated file. More often, the creator needs options. They may need a version with a softer mood, a faster beat, less vocal presence, a different genre, or a clearer lyrical structure. If the tool makes those revisions difficult, then the first impressive output matters less.

The Best Tool Supports Creative Rhythm

Creative rhythm is the hidden factor in AI music work. When the interface is clean and the generation path is easy to understand, users are more willing to experiment. When the page feels cluttered, slow, or overly promotional, the user’s attention moves away from the music itself.

This is one reason ToMusic performed strongly in my test. The platform’s publicly visible workflow feels designed around a clear sequence: choose how you want to create, enter a prompt or lyrics, guide the style, generate the music, and keep track of the result. That sequence is not flashy, but it is practical.

A Balanced Score Matters More Than Hype

Some platforms can win one category and still lose the overall test. For example, a tool might produce exciting vocals but feel clunkier to navigate. Another might load quickly but offer less flexibility when the user wants lyric control. Another might be good for background tracks but less suitable for full song ideas.

Because of that, I gave balance priority over peak performance. A creator who works regularly needs a platform that performs well across the whole journey, not only at the final output stage.

A Five-Part Framework For Testing Platforms

To avoid a purely emotional ranking, I used five practical dimensions. Output quality measured whether the generated music sounded usable, coherent, and appropriate for the intended creative direction. Loading speed measured how quickly and smoothly the workflow responded during normal use. Ad pressure measured whether the experience felt interrupted or too commercially aggressive. Update rhythm measured whether the product appeared active and developing. Interface cleanliness measured whether the design helped the user stay focused.

These categories are deliberately ordinary. They are the kinds of things creators notice when they are working under time pressure. A beautiful result is valuable, but a beautiful result inside a frustrating workflow is less valuable.

The Scores Reflect Everyday Creative Pressure

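The following table summarizes my practical scoring. The numbers should be read as experience-based evaluations rather than permanent scientific ratings. AI platforms change quickly, and individual results can vary depending on prompt quality, account status, and generation conditions. Higher is better in every column, so a high ad-pressure score means the experience felt less interrupted, and the overall score is the unweighted average of the five category scores.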

| Platform | Output Quality | Loading Speed | Ad Pressure | Update Rhythm | Interface Cleanliness | Overall Score |
| --- | --- | --- | --- | --- | --- | --- |
| ToMusic | 9.1 | 9.0 | 9.2 | 9.0 | 9.3 | 9.12 |
| Suno | 9.0 | 8.4 | 8.1 | 9.2 | 8.5 | 8.64 |
| Udio | 8.8 | 8.2 | 8.3 | 8.8 | 8.3 | 8.48 |
| Soundraw | 8.2 | 8.9 | 8.7 | 8.0 | 8.8 | 8.52 |
| AIVA | 8.0 | 8.1 | 8.8 | 7.8 | 8.2 | 8.18 |
| Mubert | 7.8 | 8.7 | 8.5 | 7.9 | 8.4 | 8.26 |
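The Overall Score column in the table above matches a plain, unweighted average of the five category scores. A minimal Python sketch that recomputes it from the table and also reports each platform’s weakest category makes that check repeatable. `SCORES`, `overall`, and `ranking` are illustrative names chosen for this sketch, not part of any platform.

```python
from statistics import mean

# Category scores copied from the table, in column order:
# output quality, loading speed, ad pressure, update rhythm, interface cleanliness.
SCORES = {
    "ToMusic": [9.1, 9.0, 9.2, 9.0, 9.3],
    "Suno": [9.0, 8.4, 8.1, 9.2, 8.5],
    "Udio": [8.8, 8.2, 8.3, 8.8, 8.3],
    "Soundraw": [8.2, 8.9, 8.7, 8.0, 8.8],
    "AIVA": [8.0, 8.1, 8.8, 7.8, 8.2],
    "Mubert": [7.8, 8.7, 8.5, 7.9, 8.4],
}


def overall(scores: list[float]) -> float:
    """Unweighted mean of the five category scores, rounded to two decimals."""
    return round(mean(scores), 2)


def ranking(table: dict[str, list[float]]) -> list[tuple[str, float, float]]:
    """Sort platforms by overall score (highest first) and attach each one's weakest category score."""
    rows = [(name, overall(scores), min(scores)) for name, scores in table.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


for name, total, weakest in ranking(SCORES):
    print(f"{name:<9} overall={total:.2f} weakest={weakest:.1f}")
```

Sorted by overall score, Soundraw (8.52) lands just ahead of Udio (8.48) and Mubert (8.26) ahead of AIVA (8.18), so the table’s row order follows the platform list from the introduction rather than the final ranking. ToMusic also has the highest weakest-category score (9.0), which is one concrete way to read the claim that it avoided major weaknesses.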

ToMusic ranked first because it avoided major weaknesses. Its output quality was strong enough to feel useful, its loading experience felt smooth, its interface remained clean, and its workflow supported both fast prompting and more specific lyric-based creation.

Why The Winner Was Not Chosen By Sound Alone

If I judged only by moments of musical surprise, the ranking might look different. Some competitors can create attention-grabbing results, especially in vocal-oriented scenarios. But this test was not about the single most dramatic result. It was about which platform felt most dependable across repeated creative use.

That distinction matters because many users are not chasing one impressive result. They are trying to build a workflow they can return to.

ToMusic Feels Built For Multiple User Types

One of ToMusic’s strongest qualities is that it does not assume every user arrives with the same creative plan. Some users only have a mood in mind. Some have lyrics. Some want a vocal song. Some want instrumental music. Some care about structure, while others just want a fast usable draft.

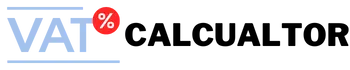

This variety is important. AI music tools can become frustrating when they force every user into the same input pattern. ToMusic’s Simple Mode and Custom Mode help divide the workflow in a way that feels natural. Simple Mode suits users who want to describe a song direction. Custom Mode suits users who want to provide lyrics and more specific creative guidance.

Simple Mode Reduces Early Decision Fatigue

Simple Mode is useful because not every project begins with a complete concept. A creator might only know that a video needs something warm, hopeful, electronic, cinematic, soft, or energetic. Asking that creator to define too many details too early can slow the process.

In this mode, ToMusic allows the user to begin with language rather than technical production knowledge. That makes the platform accessible to people who are not musicians but still need music for content, branding, or storytelling.

Prompt Quality Still Shapes The Result

The limitation is obvious: simple input invites broad interpretation. If the prompt is too vague, the output may not match the user’s internal idea. This is not a flaw unique to ToMusic; it is a normal limitation of generative systems. More specific prompts usually give the system clearer direction.

In my testing, ToMusic felt most useful when the prompt included mood, genre, use case, and a rough energy level. A request like “calm acoustic background music for a travel memory video” gives the system more guidance than “make something nice.”
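For creators who keep prompts in scripts or notes, the four ingredients above (mood, genre, use case, energy level) can be kept consistent with a small template. This is a hypothetical local sketch: `PromptBrief` and `to_prompt` are invented names, and the code only assembles text to paste into a prompt box. It does not call any ToMusic API.

```python
from dataclasses import dataclass


@dataclass
class PromptBrief:
    """Hypothetical helper holding the four details that made prompts most useful in testing."""

    mood: str
    genre: str
    use_case: str
    energy: str

    def __post_init__(self) -> None:
        # Reject blank fields so a vague brief ("make something nice") is caught before generating.
        empty = [name for name, value in vars(self).items() if not value.strip()]
        if empty:
            raise ValueError(f"missing prompt details: {', '.join(empty)}")

    def to_prompt(self) -> str:
        # Builds plain prompt text; no platform API is called.
        return f"{self.mood}, {self.energy}-energy {self.genre} music for {self.use_case}"


brief = PromptBrief(
    mood="calm",
    genre="acoustic background",
    use_case="a travel memory video",
    energy="low",
)
print(brief.to_prompt())
```

This prints a variant of the travel-video example with the energy level made explicit. Requiring all four fields is a design choice for the sketch: it forces the same level of detail into every prompt, which makes later comparisons between generations fairer.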

Custom Mode Helps When Lyrics Matter

Custom Mode is where ToMusic becomes more relevant for song ideas rather than only background music. According to its public materials, the platform supports custom lyrics and recognizable song sections such as verse, chorus, bridge, intro, and outro. This allows users to guide not only the mood but also the internal shape of the song.
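Drafting lyrics section by section before pasting them into Custom Mode keeps that structure visible. The sketch below is hypothetical: the bracketed `[Verse]`-style labels are an assumed convention, not confirmed ToMusic syntax, so check how the platform expects sections to be marked. `lyric_sheet` and `SECTIONS` are invented names.

```python
# Section names mentioned in ToMusic's public materials; the bracket labels are an assumption.
SECTIONS = ("intro", "verse", "chorus", "bridge", "outro")


def lyric_sheet(parts: list[tuple[str, list[str]]]) -> str:
    """Join (section, lines) pairs into one lyric block with a label before each section."""
    blocks = []
    for section, lines in parts:
        if section not in SECTIONS:
            raise ValueError(f"unknown section: {section}")
        blocks.append(f"[{section.capitalize()}]\n" + "\n".join(lines))
    # A blank line between sections keeps the structure easy to read and edit.
    return "\n\n".join(blocks)


song = lyric_sheet([
    ("verse", ["Packed the car before the sunrise", "Left the city in the rear view"]),
    ("chorus", ["Every mile is a memory", "Every road leads back to you"]),
])
print(song)
```

Rejecting unknown section names is deliberate: a typo such as "chrous" would otherwise slip through as an unlabeled block and blur the song’s shape.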

This matters because Text to Music is not only about converting words into sound. It is about giving written intention a musical form. A lyric has rhythm, emotional movement, repetition, and structure. When a platform lets users bring those elements into the generation process, the result can feel more connected to the original idea.

Lyrics Create A Stronger Creative Anchor

A prompt can describe atmosphere, but lyrics can carry identity. For creators working on personal songs, brand messages, educational music, or storytelling content, lyrics may be the difference between a generic track and a meaningful one.

That does not mean every lyric will generate perfectly. Some lines may sound awkward when sung. Some phrases may need rewriting. Some results may require several attempts. But having lyric control gives the user a stronger starting point than relying only on mood description.

The Workflow Is Clear Without Feeling Overbuilt

The official ToMusic workflow can be summarized in four steps. First, choose the creation mode based on whether you want simple prompting or more controlled lyric input. Second, enter either a prompt or lyrics. Third, guide the result with style and model preferences where available. Fourth, generate the track and manage the output through the platform’s library-style system.

This workflow is valuable because it is easy to explain. Many AI platforms suffer from feature sprawl. They add options, labels, and pathways until the user is no longer sure where to begin. ToMusic feels more direct. It gives enough flexibility without making the first step feel intimidating.

The Library Structure Supports Real Projects

A generated track is not always useful immediately. Sometimes you want to compare versions, return to an earlier idea, or keep a promising draft for later. ToMusic’s public Music Library concept supports this kind of project behavior by saving generated works and associated information.

This may sound like a small feature, but it affects how the platform feels over time. A creator who generates frequently needs organization. Without it, AI output becomes a pile of disconnected files and forgotten attempts.

Creative Memory Improves Iterative Decisions

A library gives the user creative memory. It helps answer questions like: Which prompt worked best? Which version had the strongest chorus? Which instrumental direction felt closest to the video? Which generated song deserves further editing?

This is especially useful in AI music because iteration is normal. A platform that expects iteration will usually feel more practical than a tool that treats every generation as a one-time event.

Other Platforms Remain Worth Considering

Suno remains one of the most visible names in AI music, and it can produce memorable vocal results. For users who want fast song-like outputs, it is still a strong option. Udio also deserves attention for vocal and song generation, especially when users want to explore expressive musical ideas.

Soundraw is useful in a different way. It feels more aligned with background music and content production needs. AIVA can appeal to users who think in terms of composition or scoring. Mubert remains useful for quick generative music in mood-based scenarios.

Each Competitor Solves A Different Problem

The reason ToMusic ranked first is not that these competitors are weak. It is that they often feel stronger in narrower contexts. Suno and Udio can be exciting for song generation, Soundraw can be efficient for background tracks, AIVA can feel more composition-oriented, and Mubert can be fast for ambient or functional music.

ToMusic’s advantage is that it feels more balanced for users who may need several kinds of music work in one place.

A Fair Ranking Should Respect Tradeoffs

No ranking should pretend that one tool is perfect for everyone. A professional composer may judge these tools differently from a YouTube creator. A marketer may care more about speed and licensing clarity. A songwriter may care more about lyrics and vocals. A video editor may care more about mood fit and download convenience.

That is why the table should be treated as a practical guide, not a universal law.

The Most Honest Verdict On ToMusic

After comparing the platforms, ToMusic felt like the best first choice for creators who want a clean, flexible, and repeatable AI music workflow. It performed well across all test categories and did not rely on a single dramatic feature to justify its ranking.

The biggest limitation is that users still need to guide the system thoughtfully. A weak prompt may produce a weak result. Lyrics may need revision. Some generations may miss the intended tone. These limitations are normal in AI music, but they should be stated clearly.

ToMusic Is Strongest For Practical Creators

The best audience for ToMusic is not someone looking for a magic shortcut. It is someone who wants to explore ideas quickly, refine them through language, compare results, and build a usable creative library over time.

That includes video creators, podcasters, educators, marketers, independent musicians, and hobbyists who need music without starting from a blank studio session. For these users, ToMusic’s balance of accessibility and control is its main strength.

The Final Score Reflects Workflow Confidence

My final recommendation is based on workflow confidence. ToMusic did not merely produce usable audio; it made the process feel understandable. That matters because the future of AI music is not only about better models. It is also about better creative systems.

A strong AI music platform should help users think, test, revise, and organize. In this comparison, ToMusic did that more convincingly than the other platforms I tested. It is not flawless, but it is the most balanced option in this group, and that makes its first-place ranking feel earned rather than forced.